Top AI Music Tools for Turning Text Lyrics into Songs

Artificial intelligence is changing music production by making it simpler to turn written lyrics into complete songs. It lowers traditional barriers such as expensive studio time and advanced instrumental skills, opening songwriting to more people. Current tools try to read a lyric’s mood, emotional weight, and rhythm and translate them into melody, harmony, and arrangement, with results that range from convincing to generic. This article evaluates leading AI music generators for turning text lyrics into songs, covering their features, limitations, and practical workflows so creators at any skill level can use them effectively.

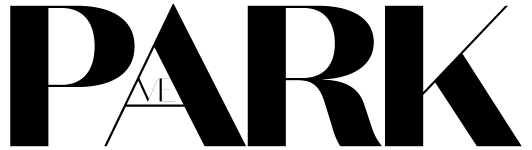

1️⃣ MakeSong — Your Lyric-to-Melody Composer

MakeSong is a streamlined platform for turning lyrical ideas directly into full musical tracks. Its intuitive interface makes it accessible to creators of any background, and its Lyrics To Song AI Free feature offers a quick starting point for sketching and exploring ideas.

Capabilities

MakeSong’s AI analyzes lyrics and proposes melodies and harmonies that match the emotional tone and rhythm of the text. Users choose from genres such as Pop, Rock, Electronic, and Ambient, along with a range of moods. The AI infers suitable instrumentation and automatically adds foundational elements such as basslines, drums, and core melodic parts. Its customizable AI vocal synthesis offers a variety of timbres and emotional ranges, producing flexible vocal tracks well suited to early-stage demos and concept validation.

Known Gaps

MakeSong is fast and offers plenty of variety, but it can struggle to capture the emotional nuance of complex lyrics, producing music that feels literal or emotionally flat. Its melodies can be generic or repetitive and often need user refinement to sound original. Control over individual instrument parts is limited. AI-generated vocals are improving but can still sound synthetic, so professional productions often need post-processing or a human re-recording.

Practical Workflow

Work in a short iterative cycle: define mood targets, generate several drafts, keep the strongest draft for each section of the song (the segment map), and finish with light human edits for consistency.
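The selection step above can be made concrete by rating each draft section by section and recording which draft wins each section. This is a minimal sketch under stated assumptions: you score drafts yourself on a 0 to 1 scale by listening, and `Draft` and `build_segment_map` are hypothetical helpers, not part of MakeSong.

```python
from dataclasses import dataclass, field


@dataclass
class Draft:
    """One generated draft with your own 0-to-1 rating per song section (hypothetical)."""
    name: str
    section_scores: dict[str, float] = field(default_factory=dict)


def build_segment_map(drafts: list[Draft], sections: list[str]) -> dict[str, str]:
    """Map each section to the name of the highest-rated draft for that section."""
    if not drafts:
        raise ValueError("need at least one draft")
    segment_map: dict[str, str] = {}
    for section in sections:
        # A draft with no rating for this section counts as 0.0; on a tie,
        # max() keeps the first draft in the list.
        best = max(drafts, key=lambda d: d.section_scores.get(section, 0.0))
        segment_map[section] = best.name
    return segment_map


if __name__ == "__main__":
    drafts = [
        Draft("draft_a", {"verse": 0.7, "chorus": 0.5}),
        Draft("draft_b", {"verse": 0.4, "chorus": 0.9}),
    ]
    print(build_segment_map(drafts, ["verse", "chorus"]))
    # {'verse': 'draft_a', 'chorus': 'draft_b'}
```

The resulting map tells you which draft to take each section from before the final human edit pass.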

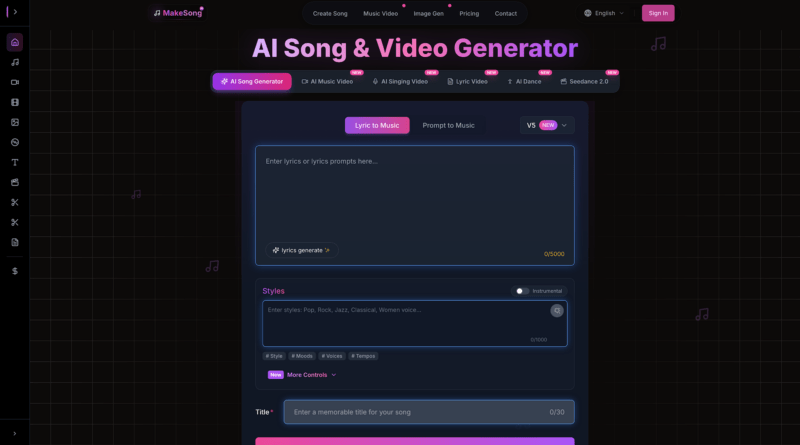

2️⃣ AIsong — Intelligent Music Creation Simplified

AIsong is a comprehensive AI Music Generator with tools for more intricate composition. It uses text lyrics as the basis for a wide range of outputs, from simple jingles to complex multi-part tracks, while keeping its interface approachable.

Capabilities

AIsong uses natural language processing (NLP) to interpret deeper lyrical context, producing arrangements that reflect the sentiment and narrative of the text. It offers granular control over instrument selection and sound design, making it possible to build multi-part song structures with dynamic changes in intensity. Its vocal synthesis aims for natural-sounding performances across multiple languages and styles, with detailed control over pitch, timbre, and emotional nuance, which matters for professional vocal tracks.

Known Gaps

Despite its NLP, AIsong can still misread subtle lyrical nuances such as sarcasm or highly abstract imagery, which may call for manual adjustment. Its extensive customization is powerful but gives beginners new to music production a steeper learning curve. Complex generations, especially those with many parameters or high-fidelity vocals, can demand substantial computing resources and take longer to process. Truly human-like vocals usually require careful fine-tuning of micro-expressions and significant post-production work.

Practical Workflow

As with MakeSong, use a short iterative cycle: define mood targets, generate several drafts, keep the strongest draft for each section of the song, and finish with light human edits for consistency.

3️⃣ Beatoven — AI for Royalty-Free Instrumental Backings with Lyrical Consideration

Beatoven.ai specializes in royalty-free instrumental music, producing custom backings for lyrics that can be used without paying per-use royalties. Users set genres, moods, and emotional arcs to match the themes of their lyrics, shaping instrumental beds that fit the song’s context.

Capabilities

Beatoven is strong at producing polished, emotionally expressive instrumental tracks. It offers precise control over track length and intensity curves, generating backings that fit the mood and genre the user specifies.

Known Gaps

By design, Beatoven lacks direct lyric-to-melody conversion or AI vocal synthesis. Users must manually compose melodies and record or synthesize vocals separately using other tools.

Practical Workflow

The workflow centers on building an instrumental foundation for your lyrics.

1. Define Lyrical Mood: Input the emotional essence, themes, and desired atmosphere of your lyrics.

2. Select Genre & Parameters: Choose a genre, adjust length, and specify dynamic changes to match your song’s progression.

3. Generate & Refine: Generate instrumental tracks, then customize and refine them until they complement your lyrics. Teams often document prompt variants and compare the outputs against reference mood targets.
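Documenting prompt variants and comparing them against mood targets can be as simple as a small log with a distance score. In this minimal sketch the mood axes, the 0 to 10 ratings, and the `Variant`, `distance_to_target`, and `rank_variants` names are all hypothetical; Beatoven does not expose them, and the ratings come from listening to each render.

```python
import math
from dataclasses import dataclass

# Hypothetical mood axes, each rated 0-10 by ear. These are not Beatoven
# parameters; they just make "matches the mood" comparable across variants.
MOOD_AXES = ("energy", "warmth", "tension")


@dataclass
class Variant:
    prompt: str               # the mood/genre settings you entered
    rating: dict[str, float]  # your 0-10 rating of the generated track per axis


def distance_to_target(variant: Variant, target: dict[str, float]) -> float:
    """Euclidean distance between a variant's ratings and the mood target."""
    return math.dist(
        [variant.rating[axis] for axis in MOOD_AXES],
        [target[axis] for axis in MOOD_AXES],
    )


def rank_variants(variants: list[Variant], target: dict[str, float]) -> list[Variant]:
    """Return the logged variants sorted so the closest match comes first."""
    return sorted(variants, key=lambda v: distance_to_target(v, target))


if __name__ == "__main__":
    target = {"energy": 3, "warmth": 8, "tension": 2}
    log = [
        Variant("cinematic, rising", {"energy": 7, "warmth": 5, "tension": 6}),
        Variant("calm acoustic, slow build", {"energy": 3, "warmth": 7, "tension": 2}),
    ]
    for v in rank_variants(log, target):
        print(f"{distance_to_target(v, target):.2f}  {v.prompt}")
```

Keeping the prompt text next to its score makes it easy to see which wording moved the output closer to the target.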

4️⃣ Stable Audio — Generative AI for Musical Fragments and Textures

Stable Audio generates high-quality musical fragments, loops, and sound effects from descriptive text prompts using advanced latent diffusion models. It’s a versatile tool for sound designers and producers to craft intricate musical ideas and custom soundscapes by assembling AI-generated audio assets.

Capabilities

Stable Audio’s strength is generating concise musical pieces, rhythmic loops, and soundscapes from precise text prompts. Users can specify genres (e.g., ‘ambient drone,’ ‘techno’) and instruments to create unique audio assets.

Known Gaps

Stable Audio primarily generates audio fragments and textures, not complete, structured songs with predefined vocal melodies. Assembling these into a cohesive song requires significant manual effort and external arrangement.

Practical Workflow

Crafting unique audio assets for lyrical support involves detailed prompting.

1. Input Text Prompt: Describe the desired musical fragment or texture (e.g., “dreamy synth chords for intro”).

2. Generate: Produce multiple audio variations based on your prompt.

3. Integrate: Export and use the generated fragments within a larger composition.
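When assembling fragments, it helps to request loops whose length spans a whole number of bars so they repeat cleanly, and to lay sections out on a shared timeline. This sketch assumes 4/4 time and that each generated loop actually holds the tempo you asked for (worth checking by ear); all function names are hypothetical.

```python
import math


def bar_seconds(bpm: float, beats_per_bar: int = 4) -> float:
    """Length of one bar: beats per bar times seconds per beat (60 / bpm)."""
    return beats_per_bar * 60.0 / bpm


def loop_seconds(bars: int, bpm: float, beats_per_bar: int = 4) -> float:
    """Clip length to request so a loop spans exactly `bars` bars."""
    return bars * bar_seconds(bpm, beats_per_bar)


def repeats_needed(section_bars: int, loop_bars: int) -> int:
    """Copies of a loop needed to fill a section, rounded up."""
    return math.ceil(section_bars / loop_bars)


def plan_arrangement(sections: list[tuple[str, int]], bpm: float,
                     beats_per_bar: int = 4) -> list[tuple[str, float, float]]:
    """Return (section, start_s, end_s) for sections placed back to back."""
    plan = []
    start = 0.0
    for name, bars in sections:
        end = start + loop_seconds(bars, bpm, beats_per_bar)
        plan.append((name, start, end))
        start = end
    return plan


if __name__ == "__main__":
    print(loop_seconds(4, 120))     # 8.0
    print(repeats_needed(16, 4))    # 4
    print(plan_arrangement([("intro", 4), ("verse", 16), ("chorus", 8)], 120))
```

At 120 BPM a bar lasts 2 seconds, so a 4-bar loop should be 8 seconds long, and a 16-bar verse built from it needs four copies.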

5️⃣ Riffusion — Visual-Driven Music Generation from Spectrograms

Capabilities

Riffusion converts text prompts into spectrogram images and then synthesizes audio from those visual patterns. This approach yields unusual textures and experimental loops that are hard to produce quickly in conventional workflows. It is useful for ideation, sonic sketching, and discovering unexpected timbral directions during early composition and pre-production sound design.

Known Gaps

Riffusion is less suited to structured, long-form songs that need stable arrangement logic. Its output is less predictable than that of conventional generators, and creators often need several passes plus manual editing to achieve production-ready continuity, especially in longer pieces. It works best as an exploratory layer rather than an end-to-end replacement for a controlled composition pipeline.
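One plausible reason Riffusion favors short loops is that each generation is a fixed-size spectrogram image, so the audio it covers is bounded by the image width. Assuming each column is one analysis frame advanced by a fixed hop, the duration is roughly columns × hop / sample rate. The numbers below (512 columns, a 441-sample hop, i.e. 10 ms at 44.1 kHz) are illustrative assumptions rather than documented settings, and the helper names are hypothetical.

```python
import math


def clip_seconds(columns: int, hop_samples: int, sample_rate: int) -> float:
    """Approximate audio span of a spectrogram with `columns` frames `hop_samples` apart.

    Ignores the extra window length at the edges, so treat it as an estimate.
    """
    return columns * hop_samples / sample_rate


def clips_needed(target_seconds: float, columns: int, hop_samples: int,
                 sample_rate: int) -> int:
    """Fixed-size clips required to cover `target_seconds`, rounded up."""
    return math.ceil(target_seconds / clip_seconds(columns, hop_samples, sample_rate))


if __name__ == "__main__":
    print(clip_seconds(512, 441, 44100))        # 5.12
    print(clips_needed(180, 512, 441, 44100))   # 36
```

Under these assumptions, a three-minute song would need about 36 separately generated clips stitched together, which is where much of the continuity work comes from.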

Practical Workflow

This unique approach involves visual and textual iteration.

1. Input Text Prompt: Describe the desired sound or texture.

2. Visualize & Generate: The AI creates a spectrogram from the prompt and then synthesizes the corresponding audio.

3. Iterate: Refine prompts to explore different sonic outcomes.

4. Curate Segments: Select the strongest fragments, then assemble and polish them in a DAW for final structure and mix consistency. As with the other tools, document prompt variants so outputs can be compared against reference mood targets.